John Doe is a Black, male teenager from North Lawndale. He is in the controversial gang affiliation database of the Chicago Police Department (CPD). He has a petty rap sheet, with four drug-related arrests in four years. He was recently beaten up, though he has never been arrested for a violent crime or gun violence, and has never been shot. There are 240 other “gang affiliated” people in the city of Chicago with similar profiles, who have been the victims of at least one assault recently and have as many narcotics arrests as John or more. But among these people, John Doe stands out: he has been given a perfect score by the CPD’s Strategic Subject List.

The Strategic Subject List is based on an algorithm the CPD uses to predict how likely it is that an individual will be involved in a shooting in the near future, as either shooter or victim. After large raids, the CPD regularly announces how many of the people they arrested were on the List, and last August, CPD Superintendent Eddie Johnson said that the department knows that those on the List “drive violence in the city.” However, there are only 153 people with a perfect score of 500, which marks them at the most extreme possible level of risk for gun violence. So why is John Doe among those people?

We know about John Doe (and the four other individuals with perfect scores who have never been shot or arrested for violent crimes or gun offenses) because the CPD recently released a year-old, anonymized version of the List, its largest step toward transparency in the seven years since it began work on the project. But there are still large gaps in the information released about the algorithm behind the List, and civil rights organizations such as the ACLU of Illinois have expressed concern about what they describe as a disturbing lack of transparency regarding both the algorithm and the potentially harmful ways the police department actually uses the scores.

There are almost 400,000 people on the List. Being arrested is the only way to get on it: every person who has been arrested in the past four years is on the List and has been assigned a score. Given the demographics of the people the CPD arrests, the majority of people on the List are Black. According to Miles Wernick, the Illinois Institute of Technology engineering professor who designed the algorithm and continues to test and update it for the CPD, the purpose of the algorithm is not to precisely predict the risk level of everyone on the List. Rather, it is meant to draw attention to a “very small group of people who are at extreme levels of risk because their recent involvement in crime is so, so high.” He compares it to a search engine, one that can comb through massive amounts of information and determine what might be important. “For instance, in a city, you may not realize that a certain person has been shot on several different occasions in the past six months. If you wanted to do something to prevent them from dying, it would be helpful to know who they are,” he said, adding that the scores are meant as “just a way to suggest to [officers and others using the List] what’s the situation out there.”

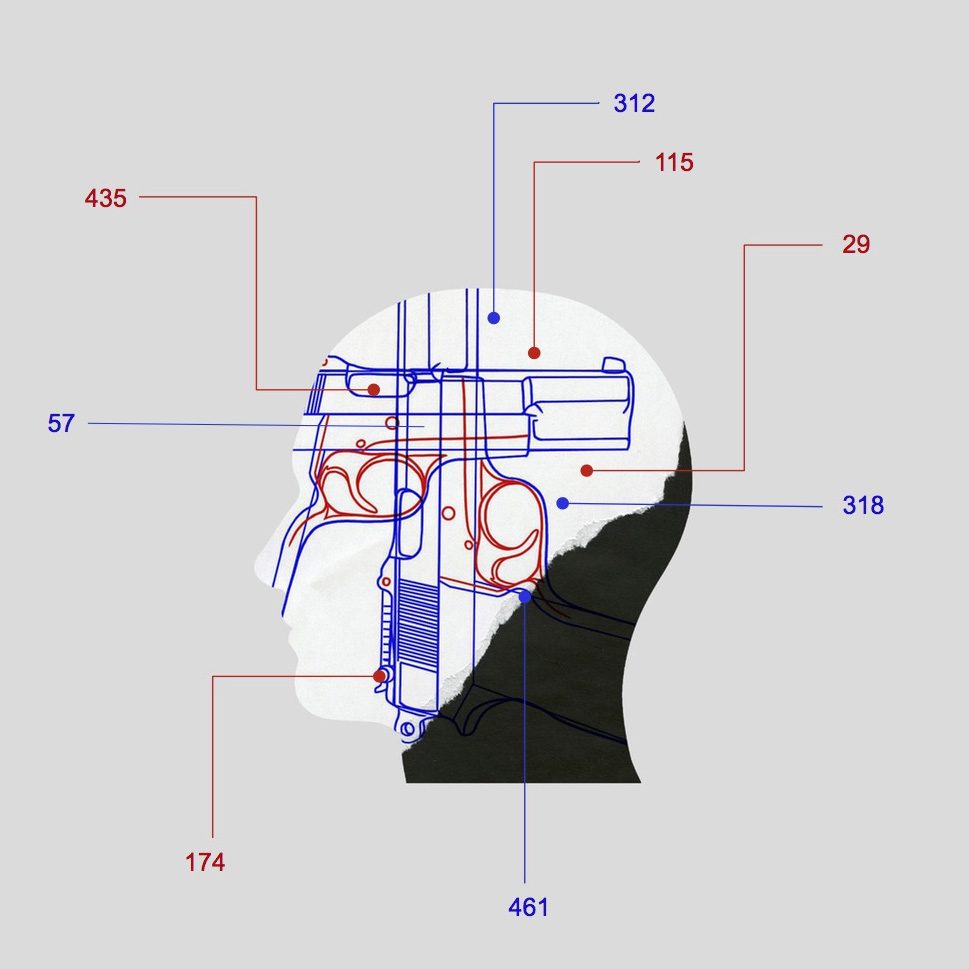

The score is calculated in two steps. First, each individual is assigned a risk score based on the following seven factors:

- Age at most recent arrest (the younger the age, the higher the score)

- Incidents in which the individual was the victim of a shooting

- Incidents in which the individual was the victim of an assault or battery

- Violent crime arrests

- Unlawful use of weapons arrests

- Narcotics arrests (Wernick said that this is the least influential variable and that it does not seem to matter much to the model)

- Trend in criminal activity (essentially whether or not an individual’s rate of criminal activity is increasing or decreasing)

These categories have changed over time. For instance, gang affiliation was long one of the factors but was removed in the most recent iteration because, Wernick said, he found it had little impact on the score. The incidents behind each factor are then weighted so that the more recent an incident is, the more it affects the score. Wernick said that an individual’s arrest and victimization patterns from the past few months matter most. The algorithm has never explicitly used attributes such as race, gender, or location. Wernick also said that he has statistically tested the algorithm for racial bias and found that it gives people with the same risk the same score regardless of race; in other words, according to Wernick, it does not systematically overestimate or underestimate the risk level of any racial group.
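Neither the CPD nor Wernick has published the model’s actual weights or formulas, so the following is only a toy illustration, with invented numbers, of how a recency-weighted score built from factors like these could be computed. The weights, the six-month half-life, the age and trend terms, and the squash onto a 0 to 500 range are all hypothetical assumptions, not the CPD’s model; gang affiliation is left out, as in the current version described above.

```python
import math
from dataclasses import dataclass

# HYPOTHETICAL weights for illustration only; the CPD has not published the real ones.
EVENT_WEIGHTS = {
    "shooting_victim": 3.0,
    "assault_victim": 1.5,
    "violent_arrest": 2.0,
    "weapons_arrest": 2.0,
    "narcotics_arrest": 0.2,  # kept small, echoing Wernick's remark that it matters little
}
HALF_LIFE_MONTHS = 6.0  # hypothetical: an event's influence halves every six months
AGE_WEIGHT = 0.1        # hypothetical: raw points added per year of age under 30
TREND_WEIGHT = 0.5      # hypothetical weight on the trend term


@dataclass
class Event:
    kind: str          # one of the EVENT_WEIGHTS keys
    months_ago: float  # how long ago the arrest or victimization happened


def recency_weight(months_ago: float) -> float:
    """Exponential decay, so recent events count more than old ones."""
    return 0.5 ** (months_ago / HALF_LIFE_MONTHS)


def trend(events: list[Event]) -> int:
    """Crude trend: events in the last 12 months minus events in the 12 months before."""
    recent = sum(1 for e in events if e.months_ago < 12)
    prior = sum(1 for e in events if 12 <= e.months_ago < 24)
    return recent - prior


def step_one_score(age_at_last_arrest: float, events: list[Event]) -> float:
    """Combine recency-weighted events, age, and trend, then squash onto (0, 500)."""
    raw = sum(EVENT_WEIGHTS[e.kind] * recency_weight(e.months_ago) for e in events)
    raw += AGE_WEIGHT * max(0.0, 30.0 - age_at_last_arrest)  # younger means higher
    raw += TREND_WEIGHT * trend(events)
    return 500.0 / (1.0 + math.exp(-(raw - 5.0)))  # logistic squash; 5.0 is an arbitrary midpoint


# Same two incidents, recent versus nearly two years old.
recent = [Event("shooting_victim", 1), Event("narcotics_arrest", 2)]
old = [Event("shooting_victim", 20), Event("narcotics_arrest", 22)]
print(step_one_score(18, recent) > step_one_score(18, old))  # True
```

Comparing the two invented histories shows the recency effect Wernick describes: the same shooting and narcotics arrest count for far more when they happened a month or two ago than when they happened nearly two years ago.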

The second step adjusts the scores based on people’s associations. More specifically, if two people have been arrested together and one of them has a high score, that can affect the other person’s score. The reasoning behind this, according to Wernick, is that people who regularly commit crimes together are likely to be in situations more similar than their individual arrest and victimization records might suggest. For instance, if Person A and Person B have been arrested together many times, they clearly know each other well and run in similar circles. If Person A has been shot twice and Person B only once, the first step of the algorithm might rate Person A as being at substantially higher risk. But Wernick said that the fact that Person A has been shot more often than Person B is likely just a matter of chance.
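The CPD has not published how this network adjustment works. As a purely hypothetical illustration, one simple way to let co-arrestees’ scores influence each other is to blend each person’s step-one score with the average score of the people they have been arrested with, weighted by how many times they were arrested together. The function name `network_adjust` and the blending parameter `alpha` are invented for this sketch.

```python
from collections import defaultdict


def network_adjust(scores: dict[str, float],
                   co_arrests: list[tuple[str, str]],
                   alpha: float = 0.5) -> dict[str, float]:
    """HYPOTHETICAL sketch: blend each person's score with the
    co-arrest-weighted mean of their associates' scores.

    alpha = 0 keeps the step-one scores unchanged; alpha = 1 replaces
    each score entirely with the associates' average. Every person who
    appears in co_arrests is assumed to also have a score in `scores`.
    """
    # Count how many times each pair of people was arrested together.
    ties: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for a, b in co_arrests:
        if a != b:
            ties[a][b] += 1
            ties[b][a] += 1

    adjusted = {}
    for person, own in scores.items():
        neighbors = ties.get(person)  # .get does not create new entries
        if not neighbors:
            adjusted[person] = own  # no co-arrests: step-one score unchanged
            continue
        total = sum(neighbors.values())
        neighbor_mean = sum(scores[n] * w for n, w in neighbors.items()) / total
        adjusted[person] = (1 - alpha) * own + alpha * neighbor_mean
    return adjusted


# A and B were arrested together twice, B and C once; D has no co-arrests.
scores = {"A": 400.0, "B": 200.0, "C": 100.0, "D": 350.0}
print(network_adjust(scores, [("A", "B"), ("A", "B"), ("B", "C")]))
# {'A': 300.0, 'B': 250.0, 'C': 150.0, 'D': 350.0}
```

In this toy version, Person A and Person B from Wernick’s example are pulled toward each other, which matches his reasoning that the gap between them is largely chance. A plain average like this can never lift anyone above their highest-scoring associate, so whatever the CPD actually does to produce a perfect score like John Doe’s may differ.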

The second, network-based step explains why John Doe has a perfect score. When asked about his case, Wernick looked him up in the full version of the List (as opposed to the spreadsheet version released to the public, which is substantially different) and said that his score was high because a relatively large number of the people he had been arrested with had been involved in shootings. So any attempt to discern from the released data why John Doe has a perfect score is a fool’s errand. His extremely high score was not based on any of the information recently released to the public about him, or even primarily on his own actions. Rather, it was based on the second step of creating the scores, adjusting risk according to social networks, which is completely absent from the version of the List recently released to the public. Consequently, it has also been absent from recent coverage of the List in major local and national outlets, which has largely tried to understand the List based only on information published by the CPD, without interviewing Wernick, the current algorithm’s creator. Likewise, the released data does not show how each individual’s victimization and arrest histories are distributed over the past four years, which is crucial to how the actual algorithm turns those histories into scores. Wernick said that the algorithm is too complicated to present in something like a spreadsheet, and that it is not clear that all the detailed components that form the List could be explained “to the public in a way that would mean anything.”

Karen Sheley, director of the Police Practices Project at the ACLU of Illinois, finds this knowledge gap disturbing. Sheley is deeply concerned by what she calls a “lack of transparency” regarding both how the List is created and whether and how the List affects police interactions and is used in criminal sentencing. She said that people may be under increased surveillance and receiving heavier sentences “based on a number, and the math behind that number is hidden. That’s not the way we operate as a democracy.”

What, then, do we know about how the police use the List? Jonathan Lewin, who as deputy chief of the CPD’s Bureau of Support Services is in charge of the Strategic Subject List project, said it is never used to guide arrests and that it is operationalized solely through a program known as Custom Notifications. According to Lewin, the algorithm’s score is just one of several factors that go into compiling the list of approximately 440 people flagged for Custom Notifications, which essentially means a visit from the police. The goal of visiting someone, according to Lewin, is “to try to get them out of the cycle to reduce the chance of victimization.”

A visit has two components. First, Lewin said, notification recipients are offered social services such as job training, G.E.D. training, or addiction assistance. Wernick said that, as he sees it, the next phase in the development of these predictive algorithms is to use them to guide a “fully social services oriented” program that would expand this component of the visit but exclude police involvement.

As it stands, however, there is a second component to the visit. According to Lewin, recipients of Custom Notifications are told that “if you don’t take advantage of these things and you continue to commit weapons offenses, for example, you could be subject to advanced penalties because of your own criminal background. Not because you are on a list—not because of your score—but because of your own criminal activity, which is what the score is based on.”

According to Sheley, there is “no way to tell” whether it is true that scores have no effect on criminal sentencing, or how effectively or consistently the CPD uses the List to provide access to social services. “We’ve tried to [file a public records request for] information about who gets offered social services and who accepts it, and we’ve really gotten nowhere with the CPD. So if they’re trying to sell this entirely as some kind of social service program, they need to make that information available to the public,” she said. The CPD’s official directive about the Custom Notifications program, available on its website, states that if any individual in the program is arrested, “the highest possible charges will be pursued” against them. Representatives for the Custom Notifications program did not respond to requests for more information from the Weekly.

Lewin also said that the score is included on the dashboard police officers use to look up information such as whether an individual they have pulled over has an outstanding warrant. Sheley said that this raises concerns, as “that kind of labeling is going to impact how the officer interacts with the person. If we’re going to have that kind of consequence, we should have more information about the entire program.” The dashboard does, however, provide some explanation of how the score works. Dashboard screenshots obtained by the Weekly through a public records request show that the main tab of the dashboard simply gives the individual’s score alongside other information such as height, weight, recent arrests, and “gang affiliation” (though Lewin and Wernick both told the Weekly that gang affiliation is no longer factored into the score). But another tab that officers can click on explains how the score is calculated. This explanation consists of what Wernick described as “graphs, essentially, that explain why the model produces the score that it did.” Wernick said that officers should not act on the score alone but should use it as a jumping-off point for understanding why the algorithm gave an individual a certain score.

Sheley is concerned, though, that there is no way to see what information officers are presented with or how they actually use that information. She had similar concerns about Wernick’s claim to have tested the algorithm for racial bias, Lewin’s claim that the scores have no effects on criminal sentencing, and other such claims about how the CPD uses predictive policing: “There’s a lot of ‘trust me’ going on. And we’re talking about criminal consequences for people. It can’t just be that we’re going to blindly trust someone who’s doing that for the government.” She referred to a report that the RAND Corporation, a well-known nonpartisan think tank, published after it was brought in to conduct an outside evaluation of the first version of the List, which was used by the CPD from late 2013 to 2014. The report said that the algorithm was not effective—that “individuals on the [List] are not more or less likely to become a victim of a homicide or shooting than the comparison group”—and, furthermore, that the way the CPD used the scores produced by the algorithm was not effective, giving little guidance regarding what authorities should do with the scores other than to “take action.”

RAND’s evaluation predated the launch of the Custom Notifications program, and the algorithm itself has changed substantially since then. However, Sheley said that whenever the Department meets criticism from outside sources about the List, “they say, ‘No, no, that’s the old version. We’re not doing that anymore.’ Well, you were doing it then. And if you’re not being fully transparent, the question is, what are you doing now that a year from now you’re going to be saying, ‘We’re not doing that anymore so it’s okay’?” RAND is currently working on a second evaluation of the current algorithm.

Lewin said that the decisions to withhold information about the List, such as the full list of categories used in the first step of the algorithm (which was not released until this May), were made for good reason: “We didn’t want people to use the model to assess risk in a way that would allow criminals to target anybody—to put people at risk. We thought that that might happen. We thought that if a criminal gang, for example, could find out the elements of the algorithm and how it worked, then they themselves—keep in mind, our adult arrest records have been public for a long time—might be able to use the model itself or some of the measures that the model uses to determine risk for rival gangs, for example, and to put those people at risk.”

Sheley laughed in response to this: “I’m kind of speechless. I think it was a wise judgement to decide to release the amount of information they have if that was the justification for not releasing it, and I would encourage them to release the rest of it as well.”

Given the perceived public safety risk of releasing information about the algorithm, I asked Lewin to explain the decision to release this information now, and whether this meant that the CPD had decided there was no substantial risk after all. Lewin responded that criminal use of the model remains a credible threat, but that the department decided to release the List after “a cost-benefit analysis to look at the value of being transparent, which I think is part of the department’s philosophy, in the context of what can happen with the model if it were used in a nefarious way. I think right now with what’s going on with [public records] laws the way they are and with the desire for transparency, it’s a better decision to say, here’s the model. Here’s how it works. Here are the variables…I think [this release] is in keeping with our philosophy of being transparent.”

In fact, journalists and others who have requested the release of the full algorithm and other information about how the List is used have long been denied by the CPD, recently leading the Sun-Times and several independent journalists, including the Invisible Institute’s Jamie Kalven, to file a lawsuit against the CPD contesting this refusal. Lewin’s reference to public records laws is potentially a nod to a ruling issued by Attorney General Lisa Madigan’s office in February, which found that the CPD’s refusal to comply with a separate Sun-Times records request for the List violated the state Freedom of Information Act. The ruling, while not directly forcing the CPD to publish the List, put the department on shakier legal footing and likely influenced its decision to partially release data from the List three months later. Still, the true test of the CPD’s transparency regarding the List may lie ahead: the department has not yet filed a response in court to the lawsuit demanding the release of the full algorithm.

Pat Sier contributed reporting.

Did you like this article? Support local journalism by donating to South Side Weekly today.